Everybody uses Del.icio.us, right? It’s a great idea – moving bookmark management out of the browser and onto the web makes bookmarking far more flexible. It builds on that improvement by adding a social aspect, letting you watch patterns of linking in a way that would otherwise be impossible.

It’s also open. You have ready access to all your data, an application of the “let me go back” approach described by Joel Spolsky (which makes things even more interesting for De.lirio.us, an open-source Del.icio.us clone).

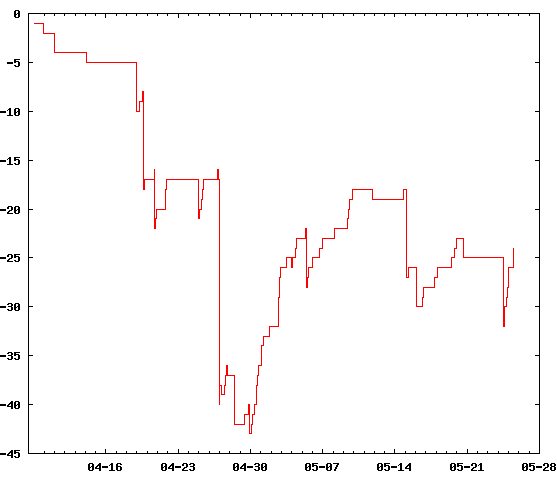

Given any large body of timestamped data, it’s tempting to impose a rough stock market analogy, run the numbers and chart the results. If a URL’s “price” is the number of users who have bookmarked it, how well does your choice of links predict mass behaviour? Are you a trend leader, or behind the curve?
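As a minimal sketch of the “price” idea, assume each URL’s bookmarks are available as a sorted list of timestamps. The helper `price_at` and the sample data are hypothetical. The price at time t is then the count of bookmarks made at or before t:

```python
from bisect import bisect_right
from datetime import datetime

def price_at(bookmark_times, t):
    """Hypothetical helper: number of users who had bookmarked a URL by time t.

    bookmark_times must be sorted in ascending order.
    """
    # bisect_right returns the count of timestamps <= t
    return bisect_right(bookmark_times, t)

# Hypothetical bookmark history for one URL
times = [
    datetime(2005, 1, 1, 9, 0),
    datetime(2005, 1, 1, 12, 30),
    datetime(2005, 1, 3, 8, 15),
]
print(price_at(times, datetime(2005, 1, 2)))  # 2
```

Evaluating `price_at` at regular intervals gives the kind of series that could be charted for each link.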

This is only a cursory analysis, so my score of minus twenty-five could easily be really, really good. Under the model I used (making a link costs one unit for each user ahead of you, including Del.icio.us itself; you earn income for every user who jumps on after you), I’ve made a net loss. The reason should be fairly obvious: a couple of links that I found well after everyone else, with no subsequent activity. Still, you have to speculate to accumulate.
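Here is a minimal sketch of that scoring rule. It assumes each link’s bookmarks are known as an ordered list of usernames, earliest first, with each user appearing at most once. It reads “including Del.icio.us itself” as one extra unit of cost and assumes income is one unit per later user. The names `link_score`, `portfolio_score` and the sample data are hypothetical:

```python
def link_score(bookmarkers, me):
    """Net score for one link under the cost/income model described above.

    bookmarkers: usernames in the order they bookmarked the link, earliest first.
    """
    position = bookmarkers.index(me)           # users ahead of me
    cost = position + 1                        # +1 for Del.icio.us itself
    income = len(bookmarkers) - position - 1   # users who joined after me
    return income - cost

def portfolio_score(links, me):
    """Sum link_score over every link that `me` has bookmarked."""
    return sum(link_score(users, me) for users in links.values() if me in users)

links = {
    "http://www.example.com/": ["alice", "me", "bob", "carol"],  # early: 2 - 2 = 0
    "http://www.example.org/": ["alice", "bob", "carol", "me"],  # late:  0 - 4 = -4
}
print(portfolio_score(links, "me"))  # -4
```

Under this rule a link found well after everyone else, with no later activity, is a pure loss. Even the first person to bookmark a link loses one unit unless someone follows.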

One major problem is that the RDF available on the site only covers the last thirty users to bookmark a link. Rather than try to scrape the HTML, I ignored any link whose history overran that limit. An extra ‘maxcount’ parameter on these requests would be a welcome addition.
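Ignoring links whose feed may have been cut off could look something like the sketch below. It assumes the thirty-entry limit described above and treats a feed with exactly thirty entries as possibly truncated. The names `FEED_LIMIT` and `complete_histories` are hypothetical:

```python
FEED_LIMIT = 30  # the site's RDF feeds list at most the last thirty users

def complete_histories(feeds):
    """Keep only links whose full bookmark history fits inside the feed.

    feeds: mapping of URL -> list of bookmark entries from that URL's feed.
    A feed at the limit might be truncated, so it is dropped rather than scraped.
    """
    return {url: entries for url, entries in feeds.items()
            if len(entries) < FEED_LIMIT}

feeds = {
    "http://www.example.com/": ["user%d" % i for i in range(12)],
    "http://www.example.org/": ["user%d" % i for i in range(30)],
}
print(sorted(complete_histories(feeds)))  # ['http://www.example.com/']
```

Dropping feeds at exactly thirty entries is conservative. A link with exactly thirty bookmarks is discarded too, because the feed alone cannot distinguish it from a truncated one.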

The RDF also has a few issues. The RSS 1.0 feed for a URL uses the same identifier (the URL itself) for each item. Semantically, it’s claiming that http://www.example.com/ was created by testuser, rather than saying that testuser created a link to that site. Subtle, but important. It also makes it impossible to tell when each user linked, and multiple links made at the same time are coalesced into one. There are the expected character encoding issues, too; it seems the site may be trusting clients a little too much.
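To see why the shared identifier matters, recall that an RDF graph is a set of triples. The sketch below models one with a plain Python set rather than RDFLib. It assumes each feed item carries Dublin Core `dc:creator` and `dc:date` properties. The users other than testuser and all the timestamps are invented for illustration. Once every item uses the URL as its subject, creators and dates pile up on a single node with nothing pairing them, and identical timestamps collapse into one triple:

```python
URL = "http://www.example.com/"

# (creator, date) pairs as the feed items intended them; data is hypothetical
items = [
    ("testuser", "2005-01-01T10:00:00Z"),
    ("otheruser", "2005-01-02T14:30:00Z"),
    ("thirduser", "2005-01-01T10:00:00Z"),  # same moment as testuser
]

graph = set()  # an RDF graph is a set of (subject, predicate, object) triples
for creator, date in items:
    # every item uses the bookmarked URL itself as its subject
    graph.add((URL, "dc:creator", creator))
    graph.add((URL, "dc:date", date))

creators = sorted(o for s, p, o in graph if p == "dc:creator")
dates = sorted(o for s, p, o in graph if p == "dc:date")
print(creators)  # ['otheruser', 'testuser', 'thirduser']
print(dates)     # ['2005-01-01T10:00:00Z', '2005-01-02T14:30:00Z']
```

Three creators are left with two dates and no way to match them up. Giving each item its own identifier (for example, one per user’s bookmark) would keep each creator attached to its own date.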

I’m using Daniel Krech’s RDFLib and Gnuplot.py; source available.